BibTeX

If you find our work useful, please consider citing:

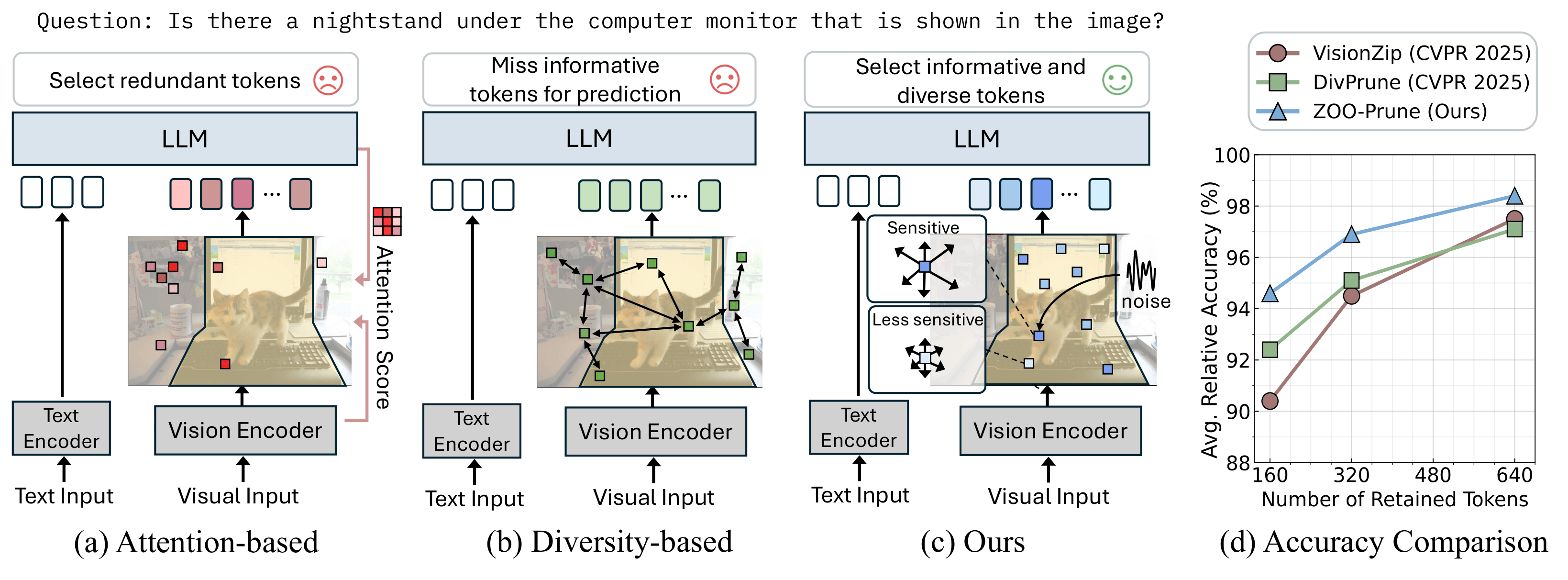

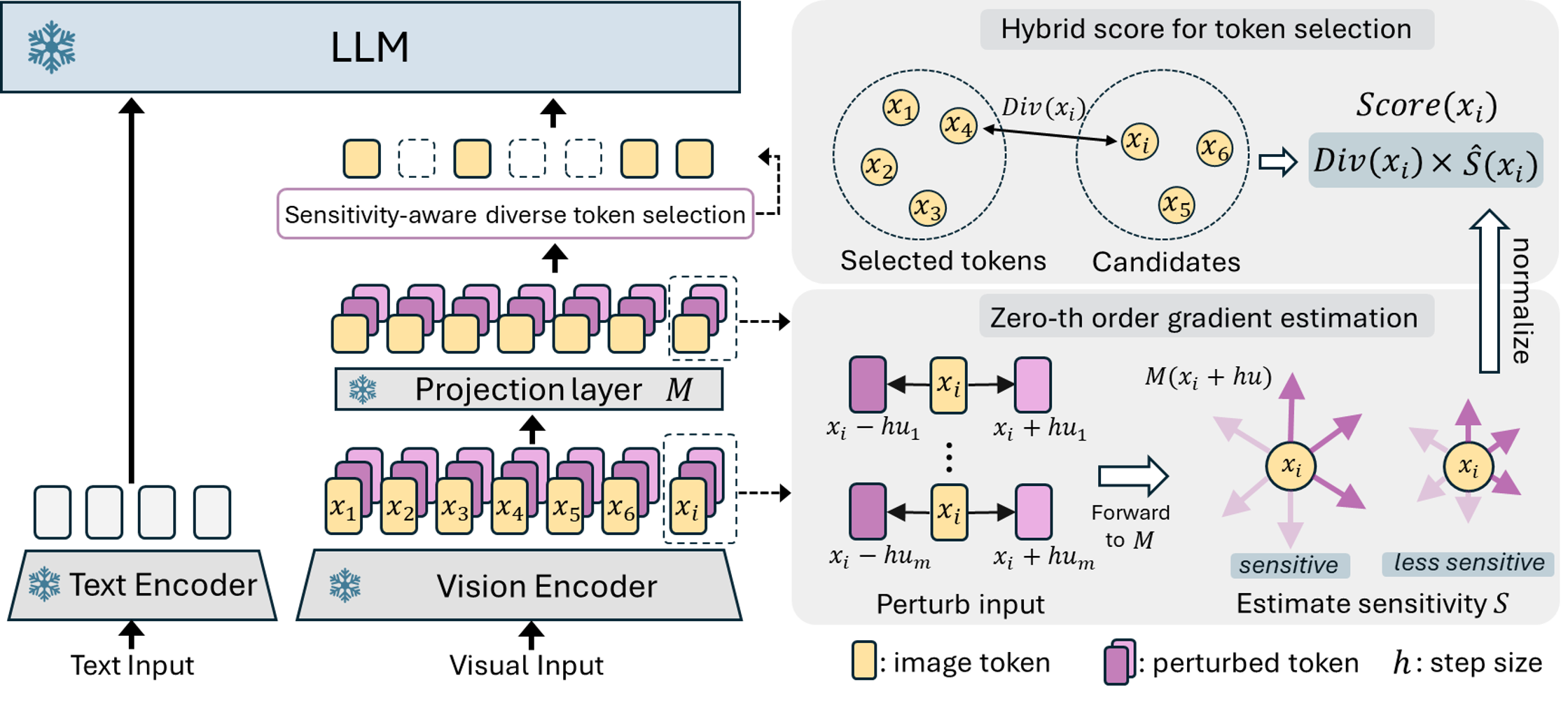

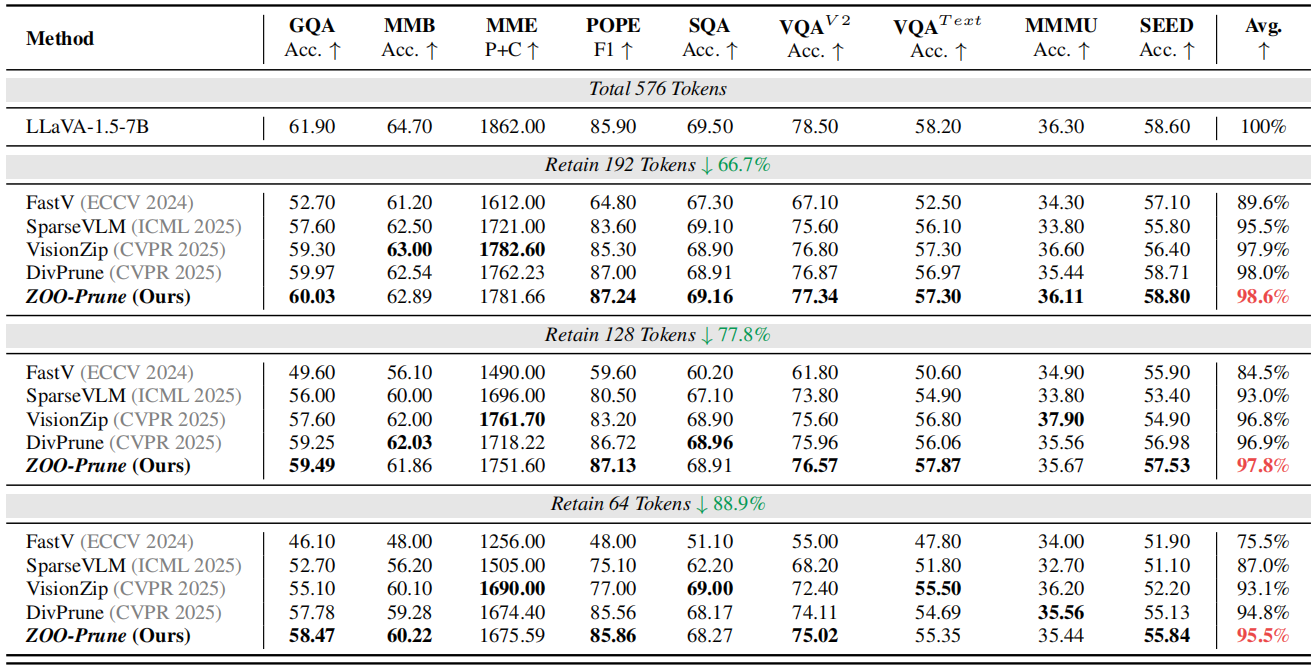

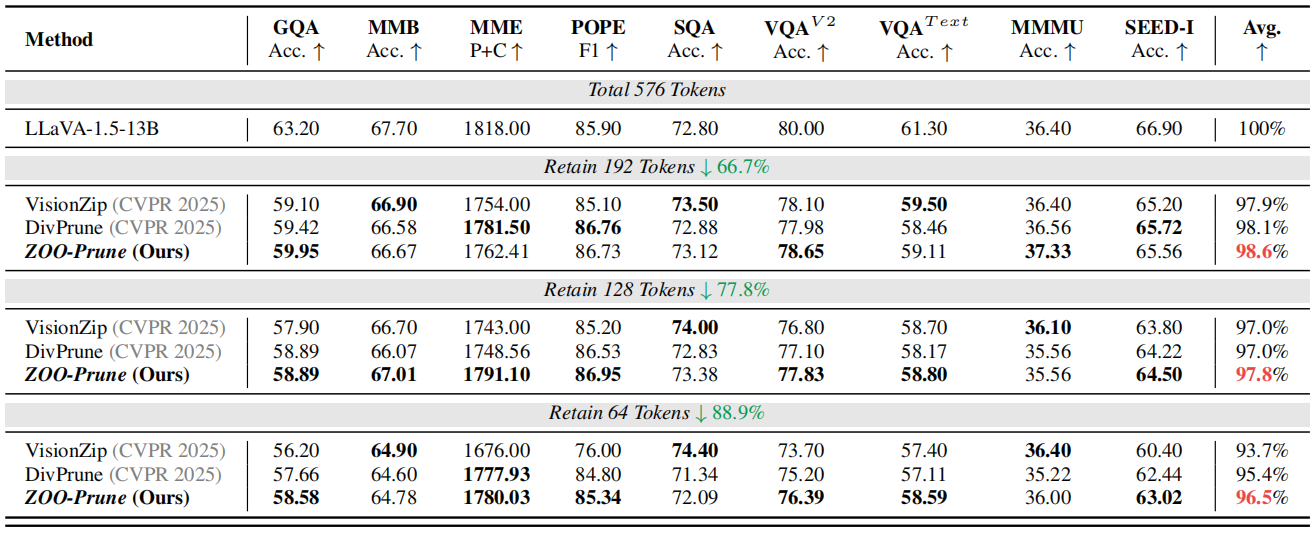

@article{kim2025training,
  title={Training-Free Token Pruning via Zeroth-Order Gradient Estimation in Vision-Language Models},
  author={Kim, Youngeun and Zhang, Youjia and Liu, Huiling and Jung, Aecheon and Lee, Sunwoo and Hong, Sungeun},
  journal={arXiv preprint arXiv:2509.24837},
  year={2025}
}